Praxis: A Writing Center Journal • Vol. 15, No. 1 (2017)

FOCUSING ON THE BLIND SPOTS: RAD-BASED ASSESSMENT OF STUDENTS' PERCEPTIONS OF COMMUNITY COLLEGE WRITING CENTERS

Genie Giaimo

The Ohio State University

giaimo.13@osu.edu

Abstract

This longitudinal mixed-methods study assesses students’ perceptions of the writing center at a large (approximately 11,325 students) multi-campus two-year college. The survey was designed collaboratively, with faculty and student participation, and this article presents findings from 865 student respondents surveyed by peer tutors-in-training. The study offers a baseline assessment (Fall 2014) of the writing center, conducted before sweeping changes in recruitment, staffing, and training models, as well as a post-assessment (Fall 2015) analysis of changes in student knowledge of the WC and its purpose. It also offers data on the trajectory of student development in relation to the number of sessions attended. In 2014, students’ experiences at the writing center were inconsistent: the poorly articulated mission of the WC adversely affected students’ knowledge scores, and the center’s reliance on editorial-like feedback, given predominantly by adjunct faculty, contributed to inconsistent reports of perceived learning across the number of sessions attended. Many of these trends, however, reversed in 2015. This paper seeks to demonstrate the important role that RAD research can play in evaluating student learning within writing center contexts and in articulating how, at what moments, and under what conditions learning and development occur in the student-writing center relationship. It also offers a replicable experimental method that researchers at other institutions can adapt and apply to their own institutional contexts and programmatic needs.

Introduction

There is incredible potential for community college writing centers to produce pertinent scholarship and to build research profiles based on replicable, aggregable, and data-supported (RAD) studies. As Richard Haswell defines it, “RAD scholarship is a best effort inquiry into the actualities of a situation, inquiry that is explicitly enough systematized in sampling, execution, and analysis to be replicated; exactly enough circumscribed to be extended; and factually enough supported to be verified . . . With RAD methodology, data do not just lie there; they are potentialized” (201). By identifying clearly characterized challenges and potential solutions, RAD studies can offer mechanistically specific data for positively influencing the development of writing centers at community colleges. The methodology is both universal and local; combining experimental rigor with questions that suit specific institutions and circumstances allows community college (CC) writing center administrators “to justify their programs and budgets to educational administrators and faculty across the disciplines who expect research-supported evidence” while also supporting a pertinent “best practices” approach to assessment (Driscoll and Perdue 35). As Clint Gardner and Tiffany Rousculp suggest, community college writing centers “have specific challenges that a director must address . . . [that] relate to their two-year only status, open enrollment, and the mission to reach out into the community” (136). Additionally, peer tutors, who are absent from community college writing centers far more often than from four-year college writing centers, are, when present, a vital resource, both as ambassadors to the student population and as participants in a RAD research program. This study recruited peer tutors-in-training to co-develop a survey, grounded in RAD methodologies, that assessed student perceptions of the writing center.

Our survey aimed to answer these questions: What do students know about the writing center? What makes them likely to be attracted to its services? Are students developing as they attend sessions, and, if so, at what point in the visitation cycle? The project was conceived in response to the findings and frustrations of students in the tutoring practicum and their desire to do better outreach and increase student use of the writing center’s services. Informal questioning and observing gave way to a more methodical and replicable experimental method: a survey with Likert scale, dichotomous (true/false, yes/no), scaled, and open-ended qualitative questions (see Appendix for survey). The survey thus took a mixed-methods approach, combining quantitative and qualitative questions. Qualitative responses help to place a narrative structure onto an otherwise numerically driven research project and offer justification for sweeping changes that an audience will, perhaps, understand and sympathize with more intuitively than with a numbers-driven model of assessment and program implementation. Additionally, mixed-methods data can be coded and analyzed within a RAD framework; as Haswell suggests, “Numbers may assist but do not define RAD scholarship. That is why the definition avoids the term empirical, which has so often been used to set up false oppositions with terms ethnographic, qualitative, grounded, and naturalistic” (201). Even when qualitative questions are added to a survey, however, I want to stress that quantitative data tend to attract upper administration and external funding committees, as narrative-based research is often too lengthy for full review (Lerner 106). It is also extremely challenging to collect enough qualitative data to support conclusions about a large and diverse population that are both representative and generalizable.

Our research study aimed to assess the perceptions of the Bristol Community College (BCC) Writing Center within the general student population. The study occurred over two years, during which time large-scale policy and staffing changes were made. While the study’s development was influenced by the local context of the institution (open-access), and the manner in which peer tutors were trained (via a required course), many of its features can be replicated at other research sites. The following features are replicable:

This study assessed the perceptions of students who had and had not previously attended the writing center.

This study recruited student researchers to disseminate the survey and code the survey results.

This study was conducted using pen-and-paper surveys (rather than electronic surveys).

This study utilized multiple research sites, both within and outside of classroom spaces, and across the disciplines.

Many of the study’s dissemination features were crafted with community colleges’ unique and heterogeneous populations in mind. Aware that CC students have distinctive study, work, and living habits, as well as varying financial circumstances, the student researchers and I realized that many conventional surveying methods that four-year college writing centers tend to use, such as exit surveys and electronic surveys, would not accurately sample our student population or their knowledge of the BCC WC. Traditionally low WC attendance (as a percentage of the overall student population) initially influenced our decision to recruit and survey students who had never attended alongside those who had. Limited technology access, whether because of skill, age, or financial situation, led us to choose pen-and-paper dissemination. Low student involvement in on-campus activities and little time spent on campus (“car to classroom” habits) influenced our decision to survey both within and outside of classroom settings. Thus, jettisoning our assumptions about the habits and motivations of the student populations that do and do not attend two-year college writing centers allowed us to capture rare data on an under-studied population and their writing confidence, writing knowledge, and knowledge of our writing center’s mission and support.

A main impetus for this project was the lack of research in our field on the habits, motivations, and perceptions of students who do not attend writing centers. Widely surveying a student population ought to be the “gold standard” of the field’s methodological approach to studying habits and perceptions within writing center contexts. Students who decline to attend a writing center can serve as a comparison group against which researchers evaluate students who do attend. Another reason to include this population in writing center assessment is that our field is predicated on the claim that writing centers “help all writers at all levels,” whereas, in reality, colleges and universities are lucky if they see 30% of a student population. At BCC, the number was even lower, around 25%. If we fail to study the non-attendee alongside the attendee, we risk losing a valuable demographic in our assessment. Administrators also often argue that the number of students who come through a service’s doors is a measure of programmatic success; from that perspective, studying the habits and perceptions of those who do not attend a writing center might help increase usage rates. To complicate this assertion, however, the findings from this study demonstrate that single-users and repeat-users of the BCC WC might have very different motivations for attending, and very different thresholds for improvement. The number of unique clients (as a percentage of overall enrollment) might not be the best way to measure a service’s impact on student learning. In fact, measuring clients’ long-term engagement with writing center support yields far more robust data on student learning and confidence development and might be another key hallmark of effective writing center support. Without studying those who do not attend the writing center, it is difficult to know what effect, if any, it has on attendees.

Method

Class description. English 262: “Tutoring in a Writing Center Practicum” met once a week for two hours and forty minutes over a fifteen-week semester in fall 2014 and fall 2015. In the first year, fourteen students were enrolled; in the second year, eight. The course had a mixture of honors and non-honors students, although it was listed as a course within the Commonwealth Honors Program. Initially, the course required the completion of common ethnographic activities, including participating in a session at the writing center, observing a tutoring session, conducting a site evaluation, and tutoring a client while under observation. In writing centers that run smoothly, such activities should not be difficult for students to complete. Unfortunately, during the fall 2014 semester, students struggled to complete these tasks in a timely manner. Although they did not realize it, some of the issues stemmed from previous mismanagement and a lack of training, which affected students’ ability to complete their ethnographic research. Clients did not show up for their appointments and, when they did, sessions ended well before the 45-minute allotment, either because the tutors were directive and provided line edits or because clients who were required to attend resisted engaging in the session. As a result, my students could not conduct complete observations and tutorials.

Developing the survey. From observing these systemic issues, my students concluded that if they struggled to use the writing center’s services with anything akin to ease, other students were probably experiencing similar difficulties. They started asking me data-specific questions about the BCC Writing Center: Do students know that we have a writing center (or four, actually)? How many students attend the WC each semester? How many attend multiple times in a semester? Do students feel that the writing center helps them to develop as writers? Do students know what a writing center does? These questions, and dozens more, guided the development of our survey. Together, we brainstormed a number of questions that we felt would improve the brand, marketing, and quality of the BCC WC’s services. These questions developed into a survey that we then shared with our Institutional Review Board (IRB). In class, we discussed the history of human subjects research (the Stanford prison experiment, the Milgram study, the Tuskegee study) and how to conduct ethical and rigorous research. We workshopped our questions to reduce selection bias and potential confounding variables, and we met with the IRB to learn how to conduct survey work methodically and sample respondents randomly. We also discussed the importance of statistical significance and the need to replicate, as closely as possible, the way we approached each potential survey participant, using tools such as a script and an anonymous survey coding system. The resulting survey data informed the BCC WC’s marketing, training, and assessment plans; it also helped me, as director, to demonstrate effective best practices within a community college writing center, when most “best practices” are based on four-year college writing centers.

Our coding system was reproduced on the back of the survey and included the surveyors’ initials, the date and time, the campus, and the specific location/class. Our first strategy was to hand out surveys in public spaces, such as the library, the cafeteria, the parking lot, the study and computer lounges, and the corridors of academic buildings. After this initial dissemination in public spaces, we identified academic disciplines (including humanities, STEM, social sciences, business/management, and art) and randomly selected classes to survey in each discipline. As students learned to survey other students, we discussed the importance of randomly selecting candidates, both in class and in public spaces. Because successful students tend to follow similar patterns of behavior (another finding that our survey revealed), such as spending more time on campus outside of class, it was imperative that students did not simply ask their friends to fill out the survey. In class, we discussed the importance of respectfully approaching strangers and the need to sample a diverse group of students; in particular, surveyors were not to assume people were students or instructors based on their age, as 53% of BCC’s student population was over 21, and the average age of an enrolled student was approximately 29 years old (“Bristol Community College Fact Sheet”). In all, we received 499 unique responses for 2014 and 366 unique responses for 2015, totaling 865 responses.
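The two sampling procedures described above can be sketched in Python: composing a survey code from the four fields the coding system recorded, and drawing classes at random within each discipline (a stratified random sample). This is a minimal illustration under stated assumptions, not the study’s procedure: the surveys were coded by hand, and the label format, campus code, and section names below are hypothetical.

```python
import random
from dataclasses import dataclass
from datetime import datetime


@dataclass(frozen=True)
class SurveyCode:
    """The four fields recorded on the back of each survey."""
    surveyor_initials: str
    when: datetime
    campus: str    # hypothetical campus code, e.g. "C1"
    location: str  # public space or class section

    def label(self) -> str:
        # Hypothetical compact format; the article does not specify one.
        stamp = self.when.strftime("%Y%m%d-%H%M")
        return f"{self.surveyor_initials}-{stamp}-{self.campus}-{self.location}"


def select_classes(sections_by_discipline, per_discipline, seed=None):
    """Stratified random sample: draw sections independently within each discipline."""
    rng = random.Random(seed)  # seeding makes the draw reproducible
    chosen = {}
    for discipline, sections in sections_by_discipline.items():
        k = min(per_discipline, len(sections))  # cannot draw more sections than exist
        chosen[discipline] = rng.sample(sorted(sections), k)
    return chosen


# Hypothetical section lists for two of the five disciplines surveyed.
sections = {
    "humanities": ["ENG101-01", "ENG101-02", "HIS110-01"],
    "STEM": ["BIO121-01", "MTH130-03"],
}
print(select_classes(sections, per_discipline=1, seed=2014))
print(SurveyCode("AB", datetime(2014, 10, 6, 9, 30), "C1", "library").label())
```

Sampling within each discipline, rather than from one pooled list, keeps a large department from crowding out smaller ones in the draw.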

Preparation of surveys. Responses were anonymous and were assigned numeric codes when they were transferred from paper to electronic storage. The data were entered by multiple student researchers and initially stored in Google Docs, a free resource for aggregating data electronically after large-scale paper survey work, although some institutions have preferences for how data are housed electronically. Qualtrics, REDCap, and Box are other programs where data may be securely collected and/or stored. All paper surveys were stored in a locked cabinet to which only I had access. Checking with the IRB before housing large data sets is imperative: some colleges have very stringent rules on which data storage platforms to use, while other colleges might not have a stand-alone IRB. Similarly, some institutions cannot afford fee-based programs like Qualtrics or data storage sites like Box. Data management guidelines can vary widely from institution to institution.

To avoid analytical bias, surveys with inconsistencies were removed based on four criteria. First, surveys that had more than three blank fields were removed. Next, surveys in which respondents claimed to attend sessions at the WC daily (the WC limited students to two sessions per week) were removed. Surveys that responded “no” to “I know where the BCC WC is located,” but also responded positively to the attendance question, were also removed. Finally, surveys that answered “no” to “I have been to the BCC WC” but also reported that the respondent goes once a semester or more often were eliminated. Surveys that answered “yes” but reported that the respondent never goes to the BCC WC were included in the analysis, because these respondents could either go less than once a semester (over the time they are enrolled at the college) or have attended in the past but never returned. In all, 55 surveys were excluded under these criteria (37 of 499 in 2014, about 7%, and 18 of 366 in 2015, about 5%), leaving 810 surveys, 462 from 2014 and 348 from 2015, for final analysis.
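A minimal Python sketch of the four exclusion criteria, assuming each paper survey has been transcribed into a record with the fields shown. The field names and the attendance-frequency options are hypothetical, and “responded positively to the attendance question” is read here as answering “yes” to “I have been to the BCC WC.”

```python
from dataclasses import dataclass

# Hypothetical answer options for the attendance-frequency question.
FREQUENCIES = ("never", "once a semester", "once a month", "once a week", "daily")


@dataclass
class Response:
    blank_fields: int     # number of unanswered items
    knows_location: bool  # "I know where the BCC WC is located"
    has_attended: bool    # "I have been to the BCC WC"
    frequency: str        # one of FREQUENCIES


def exclusion_reason(r: Response):
    """Return the first criterion a survey fails, or None if it is kept."""
    if r.blank_fields > 3:
        return "more than three blank fields"
    if r.frequency == "daily":
        return "daily attendance exceeds the two-sessions-per-week limit"
    if not r.knows_location and r.has_attended:
        return "does not know the location but reports attending"
    if not r.has_attended and r.frequency != "never":
        return "has not attended but reports a visit frequency"
    # "Yes" with "never" is kept: the student may attend less than once a
    # semester or may have visited once and not returned.
    return None


def screen(responses):
    """Split responses into kept surveys and a count of excluded ones."""
    kept = [r for r in responses if exclusion_reason(r) is None]
    return kept, len(responses) - len(kept)
```

The checks run in the order the criteria are listed; a survey that fails several is reported under the first one it fails, which does not change the number of exclusions.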

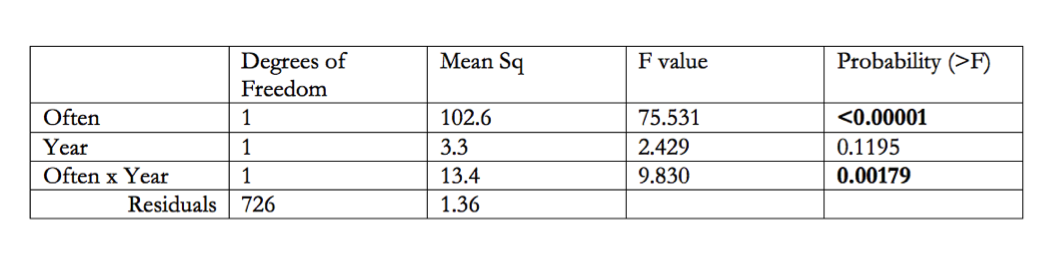

After inconsistent surveys were removed from the data set, a new variable, “WC knowledge,” was calculated by adding together the responses to the three true-or-false questions (Questions 2, 3, and 4) and Question 6 (see Appendix). A correct answer was scored 1 and an incorrect answer 0, so each student received a knowledge score ranging from 0 to 4, where 0 means they answered none of the general WC knowledge questions correctly and 4 means they answered all of them correctly.¹ Both the knowledge score and Question 11, about writer development, were analyzed using ANOVA in RStudio (v. 0.99.491).²
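The knowledge score can be sketched as follows, assuming each respondent’s answers are stored in a dictionary keyed by question. The answer key is a placeholder (the correct responses to Questions 2, 3, 4, and 6 belong to the survey instrument, not to this sketch), and scoring blank answers as 0 is an assumption.

```python
# Placeholder answer key: the real correct answers to Questions 2, 3, 4,
# and 6 are in the survey instrument and are not reproduced here.
ANSWER_KEY = {"Q2": True, "Q3": False, "Q4": False, "Q6": True}


def knowledge_score(answers: dict) -> int:
    """One point per item matching the key; blanks (missing keys) score 0."""
    return sum(1 for q, correct in ANSWER_KEY.items() if answers.get(q) == correct)


def mean_score(respondents) -> float:
    """Average knowledge score (0-4) across a group of respondents."""
    scores = [knowledge_score(a) for a in respondents]
    return sum(scores) / len(scores)


print(knowledge_score({"Q2": True, "Q3": True, "Q4": False}))  # → 2
```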

Results

We studied the effect of sweeping policy changes and the introduction of peer tutors to the staff from two perspectives: students’ general knowledge about the writing center, and their self-perceptions of their development as writers. In other words, we wanted to know whether students’ knowledge scores improved from 2014 to 2015 and whether students’ self-assessment of their development as writers changed from 2014 to 2015. Overall, the message of the WC was better communicated to clients after policy, training, and staffing changes were made following Fall 2014. Students who attended the writing center in 2015 better understood the term “edit” and consistently reported that the WC helped them develop as writers.

Overall Knowledge of BCC Writing Center Policies and Locations

However, the overall average knowledge score did not change between the two years, remaining at 2.45, which indicates moderate knowledge of WC policies and locations among students overall (see Figure 1 in Appendix). The average student who goes to the WC at least once a semester, however, has a higher knowledge score for the practices and location of the WC than those who never use the BCC WC. Students who do not report attending the writing center have moderate knowledge (2.22) of the BCC WCs’ policies and practices. In contrast, students who attend a BCC Writing Center, regardless of how often they attend, have an average score of approximately 2.90. We can further break down these averages to see where misinformation about the WC is most common.
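To illustrate the kind of comparison behind these averages, here is a from-scratch one-way ANOVA F statistic in Python. It is a simplified sketch: the model reported in this study, fitted in R, also included survey year and a year-by-attendance interaction, which a one-way test omits, and the scores in the example are invented. If SciPy is available, `scipy.stats.f_oneway` returns the same F statistic together with a p-value.

```python
from statistics import mean


def one_way_anova(*groups):
    """Return (F, df_between, df_within) for two or more groups of scores."""
    values = [x for g in groups for x in g]
    grand_mean = mean(values)
    group_means = [mean(g) for g in groups]
    # Between-group variation: how far each group mean sits from the grand mean.
    ss_between = sum(len(g) * (m - grand_mean) ** 2 for g, m in zip(groups, group_means))
    # Within-group variation: spread of individual scores around their group mean.
    ss_within = sum((x - m) ** 2 for g, m in zip(groups, group_means) for x in g)
    df_between = len(groups) - 1
    df_within = len(values) - len(groups)
    f_stat = (ss_between / df_between) / (ss_within / df_within)
    return f_stat, df_between, df_within


# Invented knowledge scores (0-4), for illustration only.
never_attended = [2, 1, 3, 2, 2, 3]
attended = [3, 4, 3, 2, 3]
f_stat, df_b, df_w = one_way_anova(never_attended, attended)
print(f"F({df_b}, {df_w}) = {f_stat:.2f}")
```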

One major finding of this study is that students generally seem to have good semantic knowledge of the BCC Writing Centers; most students know there is a writing center on every campus and they know where a WC is located. They are confused, however, in their procedural knowledge of the writing center, such as whether or not they can drop off papers and whether or not tutors edit their papers (see Figure 1 in Appendix). The highest overall knowledge scores are among those who attend the WC, and the most correct responses concern location and availability on all campuses. Those who attend are more likely to answer these two questions correctly (see Table 1 in Appendix). The only question within the knowledge score whose responses changed between 2014 and 2015 was whether the WC edits students’ papers. In 2014, students’ procedural knowledge was very limited and erroneously focused on the idea that tutors would edit their papers. We believe this is because of the directive tutoring model that professional and faculty tutors used in the WC up to that point. In support of this claim, in 2014 more students who attended the writing center thought that tutors edit their papers than did students who never attended the WC, which points to how tutors and clients engaged with writing in session. Confusion over the definition of “edit” might also have influenced responses. In 2015, however, after the introduction of non-directive tutoring models in training sessions, as well as the reintroduction of peer tutors, the pattern reversed; students who attended the WC were less likely to report that the WC edits their papers (see Figure 1 in Appendix; see Table 1 in Appendix for the attendance-by-year interaction). Thus, the initial directive tutoring model appears to have profoundly shaped students’ perceptions of the WC as an editorial site, rather than as a site that fosters meta-cognition in reading and writing through student-directed learning practices.

These data suggest that the writing center’s existence and locations are well advertised but that there is a disconnect between the writing center’s policies and mission and students’ perceptions of what the writing center does, even among students who regularly use the writing center’s services. While in 2014 the responses to the policy questions were in line with the center’s older model of offering directive feedback and editorial support via an all-faculty professional tutor staff, widespread changes to tutor training and staffing models in 2015 (such as the addition of peer tutors) contributed to an increase in correct responses to the editing question. The BCC tutors were trained to stop relying on directive feedback and editor personae in their sessions with students, a change reflected in the reversed pattern of responses to the editing question between attendees and non-attendees (as well as in the coded qualitative responses, which are unpublished data).

Following incorporation of non-directive tutoring methods, students were more likely to report that the WC helped them develop as writers.

While knowledge scores are important when considering messaging, mission, and performance of services, an even more important question is whether the WC is having an impact on students’ writing abilities. The flip side of positive impact is negative impact, namely student dependence on the WC, which would appear in the data as reports of little to no development combined with increased writing confidence and/or increased attendance. To better understand how students use the BCC WC, and how they are affected by engaging with it, we asked them whether they agreed that the WC helped them develop as writers. There was a stark difference between the years, with 2015 showing a stronger and more consistent relationship between the number of visits to the writing center and positive responses to the writing development question (see Figure 2 in Appendix).

Prior to the start of the Fall 2014 semester, and for a number of years, there was no consistent staff training. This is reflected in the inconsistency of students’ experiences with the writing center. Previously, we discussed how the former directive tutoring method shaped students’ perceptions of the WC as an editorial space. Student responses to the question about editing were then reshaped by non-directive training initiatives and changes to the tutor-staffing model in 2015. This pattern is even clearer when comparing how students reported writing development by session attendance in 2014 and 2015 (see Figure 2 in Appendix). In 2014, there is a very weak relationship (R² = 0.09) between how often a student attends the writing center and how much they feel they are developing as a writer; this reflects the large spread of individual responses (not shown). Comparing the means and the slope (0.4), which is relatively flat, shows only marginal improvement in perceived development with more frequent attendance. This could be due to inconsistent tutoring or to confusion about the policies and mission of the service, which might have led students to use the WC for a range of reasons, some passive (required to attend for class, expects line editing) and some active (desire for feedback, expects to collaborate), thus producing inconsistent student outcomes. Across both years, how often a student attends the writing center is a strong indicator of how the student feels about the development of their writing process (see Table 2 in Appendix). There is also a significant interaction between survey year and attendance frequency, meaning that the relationship between how often a student attends the WC and the improvement they feel they are making differed between 2014 and 2015. In 2014, students could attend 6 sessions and not report improvement in writing skill, whereas in 2015 students attending far fewer sessions (3) reported improvement in their writing.

2015, then, was a far more consistent year, possibly due to the extensive training of the staff in Fall 2014 and Spring 2015 and the introduction of trained peer tutors. In 2015, there is a very strong relationship (R² = 0.75)³ between how often a student attends the WC and how much they feel they have developed. Furthermore, comparing the means and the slope (1.2), which is steeper than in 2014, reveals that it takes students fewer sessions to report positive outcomes. Interestingly, students are ambivalent or negative about their development as writers for the first 2 appointments at the WC; from 3 appointments onward, however, students reliably report that the WC is having a positive impact on their development. Perhaps this gap in positive reports for 2 or fewer sessions is due to student frustration with a novel (i.e., non-directive, student-led, meta-cognitively focused) learning model and a desire for clearer rules and guidelines in line with previous educational experiences. The 2015 results stand in stark contrast to 2014, when students could attend the WC 6 times, on average, and still strongly disagree with the assertion that the BCC WC was helping them develop as writers. It is possible that a change between 2014 and 2015 in mission, from remedial support to non-label-focused and process-oriented support, also affected students’ assessment of their development and writing confidence (unpublished data; Mohr 2). Overall, the new training changed how tutors interacted with students, and the active learning models it introduced led more consistently to sessions in which students felt they were developing as writers.
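The two quantities reported for each year, the least-squares slope and R² of perceived development against number of sessions, can be computed from scratch as below, along with a helper for the kind of threshold described (the smallest session count from which mean ratings stay above the scale’s neutral point). This is a sketch under assumptions: the study does not say whether its slopes and R² values were fitted to individual responses or to per-session means, the neutral value depends on how Question 11’s Likert scale was coded, and the data in the example are invented.

```python
from statistics import mean


def fit_line(sessions, ratings):
    """Ordinary least squares for ratings ~ sessions: (slope, intercept, R^2)."""
    mx, my = mean(sessions), mean(ratings)
    sxx = sum((x - mx) ** 2 for x in sessions)
    sxy = sum((x - mx) * (y - my) for x, y in zip(sessions, ratings))
    slope = sxy / sxx
    intercept = my - slope * mx
    ss_res = sum((y - (intercept + slope * x)) ** 2 for x, y in zip(sessions, ratings))
    ss_tot = sum((y - my) ** 2 for y in ratings)
    return slope, intercept, 1 - ss_res / ss_tot


def first_sustained_positive(mean_by_sessions, neutral):
    """Smallest session count from which every mean rating exceeds `neutral`."""
    counts = sorted(mean_by_sessions)
    for i, c in enumerate(counts):
        if all(mean_by_sessions[d] > neutral for d in counts[i:]):
            return c
    return None


# Invented data: sessions attended and agreement ratings on a 1-5 scale.
slope, intercept, r2 = fit_line([1, 2, 3, 4, 5], [2, 2, 4, 4, 5])
print(f"slope = {slope:.2f}, R^2 = {r2:.2f}")
print(first_sustained_positive({1: 2.6, 2: 2.9, 3: 3.4, 4: 3.8}, neutral=3))  # → 3
```

A steeper slope means each additional session is associated with a larger gain in reported development; R² says how tightly responses cluster around that line, which is why a large spread of individual answers can yield a low R² even when the slope is positive.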

Conclusions

There are a number of factors to consider before implementing a longitudinal RAD study. The first concerns planning, the second concerns sample size, and the third concerns the long-term effects that result from such work. I address each of these, in turn, below.

Implementing a longitudinal study on the same schedule as the tutoring course helped to frame our approach by establishing a consistent sampling period (fall semesters) and a consistent investigator pool (student researchers from the course). Even if a given CC’s WC does not have a tutor-training course, a similar project is still possible. Another possible model is recruiting students from across the disciplines and in general education courses (such as introduction to psychology or statistics) to conduct similar survey work as part of an experiential learning component or final project for a class. Alternatively, asking peer tutors to engage in this survey work during “no-shows” and other downtime in a writing center also makes this work feasible. In short, recruiting student researchers is crucial to conducting “on-the-ground” surveying and response coding; this model can also build a research profile for a CC WC in a relatively short time while fostering a community of practice in which student researchers and, later, peer tutors feel empowered to conduct their own studies.

However, it is imperative to ensure a large enough sample size for similar RAD projects; this is a direction the field ought to move toward, along with prioritizing replicability in survey work and surveying non-attendees alongside WC attendees. Community colleges have varied population demographics that often do not align with four-year colleges’ WEIRD (Western, Educated, Industrialized, Rich, Democratic) student populations, so scholars in the field need to account for such variation in their experimental design and increase sample sizes accordingly (Henrich et al.). The more variation there is in a population, the larger a survey’s sample size needs to be in order to detect differences between groups. The sample size needed to reach significance might be much smaller at schools with less variation in student demographics and a more contained campus. Still, it is imperative that we capture as wide a cross-section of the student population as possible, because doing so offers a clearer picture of the challenges and needs of individual student groups within the general population. Surveying both those who do and those who do not use writing center services is one way to achieve such breadth. The students who attend community colleges are far from uniform, as their life experiences, socioeconomic statuses, ages, and other demographics suggest. Studying their perceptions of academic support for writing will certainly yield results different from those for traditional college students.
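The link between population variation and sample size can be made concrete with the standard normal-approximation formula for comparing two group means: n per group ≈ 2(z₁₋α/₂ + z₁₋β)²σ²/δ², where σ is the standard deviation of the outcome and δ is the smallest difference worth detecting. The sketch below is illustrative only; the study does not report a power analysis, and the σ and δ values are hypothetical. Because n grows with σ², doubling the spread of responses roughly quadruples the respondents needed per group.

```python
import math
from statistics import NormalDist


def n_per_group(sigma, delta, alpha=0.05, power=0.80):
    """Approximate respondents per group to detect a difference `delta` in
    group means (two-sided test) when responses have standard deviation `sigma`."""
    z = NormalDist()  # standard normal distribution
    z_alpha = z.inv_cdf(1 - alpha / 2)
    z_beta = z.inv_cdf(power)
    return math.ceil(2 * ((z_alpha + z_beta) * sigma / delta) ** 2)


print(n_per_group(sigma=1.0, delta=0.5))  # → 63
print(n_per_group(sigma=2.0, delta=0.5))  # → 252
```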

Yet, researchers at two-year colleges are not necessarily constrained by a “uniqueness factor” that renders a study’s results inapplicable to other institutional contexts (Driscoll and Perdue, “RAD Research” 121). Instead, local replicable research projects are critical to revealing the challenges that two-year college writing centers (or writing centers at any institution) face and to providing “evidenced based practices” that improve center efficacy and demonstrate what does and does not work (Driscoll and Perdue, “RAD Research” 122). Replicability hinges on keeping one’s investigator training, respondent recruitment, sampling methods, sample size, and coding methods consistent. Running multiple “trials” of a study also helps to determine change over time, effect size, and which results apply beyond the local context, all of which systematically advances writing center research and assessment.

I hope to instill in community college WC directors (and directors at all levels of higher education) a sense that conducting RAD research is not only tenable but also critical to our work in the profession. Implementation is an important aspect of the assessment process, as Joan Hawthorne suggests when she writes, “Moving from collection of data to actual use requires attention to two aspects of the research assessment process: analysis of findings and implementation or ‘closing the loop’” (243). In addition to justifying our budgets, RAD research can provide granular data that two-year college writing center administrators can use to inform targeted marketing or specialized training and services, all of which is critical, given the very diverse and often time-strapped population of students attending two-year colleges. At the macroscopic level, RAD research methods and replicability can provide a map for other institutions to conduct similar research. Perhaps the most attractive feature of this research method, however, is that it can help determine whether students who do attend the writing center are learning and developing as writers. Perceptions about writing centers, ultimately, are present wherever writing centers are located, and it is often hard to change those perceptions because they can be so ingrained in an institution’s culture. Examining how perceptions influence a student’s decision to attend or not attend a writing center is complicated by the fact that most students are probably unaware of what motivates them in one direction or the other, unless, of course, they are required to attend! Our study revealed that students are more likely to attend the writing center if they have the following traits: moderate writing confidence, receptivity to learning new writing strategies, and moderate engagement with non-directive tutoring processes.
Once students attend the writing center, they are more likely to continue attending if they report positive self-assessment of their development as writers. Studying the perceptions and attitudes of writers helps us to understand a decision that might seem spontaneous and happenstance; walking through a writing center’s doors. But to keep them coming back, it’s important for students to have positive experiences with writing and a belief that they are developing as writers; this, of course, takes time and patience on the part of the writer, as well as the tutor. Our finding that students’ positive self-reportage of writing development requires a fairly high threshold of engagement (3 or more sessions) with writing center services runs counter to the administration’s focus on unique student interactions—rather than continual student attendance—as a marker of success for our center. This finding also challenges another assertion I often heard (and assessed in the survey) that requiring students to attend a session for class credit makes them more likely to continue attending. When the threshold for positive development is 3 or more sessions, requiring students to attend just once potentially warns them away from the writing center. Findings that were not included in this paper (but will be forthcoming in future articles) further support that required attendance discourages a large percentage of students from using the writing center again. Thus, RAD research can confirm or disconfirm our hunches and hypotheses and provide us with much-needed evidence for or against our most cherished (or hated) practices.

Acknowledgement

Thank you to Dr. Katherine R. O’Brien (OSU) for support with data analysis and for providing figures in RStudio.

Notes

1. All other coding and scoring metrics are not pertinent to this discussion; however, I am willing to share these methods with those interested in conducting a similar project in the future.

2. Data will be uploaded to the Praxis Research Exchange.

3. Generally, R² values greater than 0.5 (i.e., a model that accounts for more than half of the variance in the outcome) are considered substantial when studying human behavior.
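Because note 3 turns on the R² statistic, here is a minimal sketch of how it is computed: one minus the ratio of the residual sum of squares to the total sum of squares, i.e., the share of variance in the observed outcome that a model's predictions account for. The function name and the toy values are illustrative assumptions, not drawn from the study.

```python
from statistics import mean
from typing import Sequence


def r_squared(observed: Sequence[float], predicted: Sequence[float]) -> float:
    """Coefficient of determination: 1 - SS_residual / SS_total."""
    y_bar = mean(observed)
    # Variation the model's predictions leave unexplained.
    ss_residual = sum((y - y_hat) ** 2 for y, y_hat in zip(observed, predicted))
    # Total variation of the observations around their own mean.
    ss_total = sum((y - y_bar) ** 2 for y in observed)
    return 1 - ss_residual / ss_total


# Invented values for illustration only.
observed = [1, 2, 3, 4]
predicted = [1.5, 2, 3, 3.5]
print(r_squared(observed, predicted))  # 0.9
```

On this reading, the 0.5 threshold in note 3 corresponds to a model whose residual variation is less than half of the total variation.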

Works Cited

“Bristol Community College Fact Sheet.” Bristol Community College, 2015. http://www.bristolcc.edu/media/bcc-website/about/factsheets/FactSheet2016_left_centered.pdf. Accessed 1 May 2017.

Driscoll, Dana Lynn, and Sherry Wynn Perdue. “RAD Research as a Framework for Writing Center Inquiry: Survey and Interview Data on Writing Center Administrators’ Beliefs about Research and Research Practices.” The Writing Center Journal, 2014, pp. 105-133.

---. “Theory, Lore, and More: An Analysis of RAD Research in ‘The Writing Center Journal,’ 1980–2009.” The Writing Center Journal, vol. 32, no. 2, 2012, pp. 11-39.

Gardner, Clinton, and Tiffany Rousculp. “Open Doors: The Community College Writing Center.” The Writing Center Director’s Resource Book, edited by Christina Murphy and Byron L. Stay, Lawrence Erlbaum Associates, 2006, pp. 135-145.

Haswell, Richard H. “NCTE/CCCC’s Recent War on Scholarship.” Written Communication, vol. 22, no. 2, 2005, pp. 198-223. Accessed 1 Nov. 2016.

Hawthorne, Joan. “Approaching Assessment as if It Matters.” The Writing Center Director’s Resource Book, edited by Christina Murphy and Byron L. Stay, Lawrence Erlbaum Associates, 2006, pp. 237-248. Accessed 1 Nov. 2016.

Henrich, Joseph, et al. “Most People Are Not WEIRD.” Nature, vol. 466, no. 7302, 2010, p. 29. doi:10.1038/466029a. Accessed 1 Nov. 2016.

Lerner, Neal. “Of Numbers and Stories: Quantitative and Qualitative Assessment Research in the Writing Center.” Building Writing Center Assessments That Matter, edited by Ellen Schendel and William J. Macauley, Jr., UP of Colorado, 2012, pp. 106-114. Accessed 1 Nov. 2016.

Mohr, Ellen. “Marketing the Best Image of the Community College Writing Center.” Writing Lab Newsletter, vol. 31, no. 10, 2007, pp. 1-5. Accessed 1 Nov. 2016.

Schendel, Ellen, and William J. Macauley, Jr. Building Writing Center Assessments That Matter. UP of Colorado, 2012.

Appendix

Figure 1: Summarizes the four knowledge questions and their response scores, as well as the aggregated knowledge score for all four questions (left). All responses are broken down by survey year and respondent type (WC attendees and non-attendees), illustrating that respondents had generally strong semantic knowledge of BCC WC’s existence and location; however, procedural knowledge about policies (editing and paper drop-off) was weak.

Table 1: Summarizes the results of five ANOVAs showing the effects of attendance and survey year on mean knowledge scores. This table illustrates that students who attend the writing center, in either year, have more accurate overall knowledge scores, as well as more accurate scores on the individual questions regarding location and drop-off policy. However, the editing-policy question shifted from 2014 to 2015: by 2015, students who attend the writing center are more likely to demonstrate correct knowledge about the writing center’s editing policy.
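Table 1 reports ANOVAs that cross attendance (attendee vs. non-attendee) with survey year. To show, under simplifying assumptions, what such a two-factor test computes, the sketch below partitions variation into the two main effects, their interaction, and error, and returns an F statistic for each. It assumes a balanced design (the same number of respondents in every cell), which real survey samples rarely satisfy, and it stops at F: p-values would come from the F distribution (for example, scipy.stats.f.sf(F, df1, df2)), and a statistics package such as R's aov() or statsmodels' anova_lm() is the practical choice for real, unbalanced data. The function name and toy scores are hypothetical; this is not the study's analysis code.

```python
from itertools import product
from statistics import mean
from typing import Dict, List, Tuple

Cells = Dict[Tuple[str, str], List[float]]


def two_way_anova_f(cells: Cells) -> Dict[str, float]:
    """F statistics for a balanced two-factor design (A x B) with interaction.

    `cells` maps (level of A, level of B) to the scores in that cell; every cell
    must contain the same number n >= 2 of observations.
    """
    a_levels = sorted({a for a, _ in cells})
    b_levels = sorted({b for _, b in cells})
    n = len(next(iter(cells.values())))
    if n < 2 or any(len(v) != n for v in cells.values()):
        raise ValueError("this sketch only handles balanced designs with n >= 2 per cell")

    grand = mean(x for v in cells.values() for x in v)
    a_means = {a: mean(x for b in b_levels for x in cells[(a, b)]) for a in a_levels}
    b_means = {b: mean(x for a in a_levels for x in cells[(a, b)]) for b in b_levels}
    cell_means = {k: mean(v) for k, v in cells.items()}

    # Partition the total sum of squares into main effects, interaction, and error.
    ss_a = n * len(b_levels) * sum((m - grand) ** 2 for m in a_means.values())
    ss_b = n * len(a_levels) * sum((m - grand) ** 2 for m in b_means.values())
    ss_ab = n * sum(
        (cell_means[(a, b)] - a_means[a] - b_means[b] + grand) ** 2
        for a, b in product(a_levels, b_levels)
    )
    ss_error = sum((x - cell_means[k]) ** 2 for k, v in cells.items() for x in v)

    df_a, df_b = len(a_levels) - 1, len(b_levels) - 1
    df_error = len(a_levels) * len(b_levels) * (n - 1)
    ms_error = ss_error / df_error
    return {
        "A": (ss_a / df_a) / ms_error,
        "B": (ss_b / df_b) / ms_error,
        "A x B": (ss_ab / (df_a * df_b)) / ms_error,
    }


# Invented knowledge scores (0-4) for illustration only; not the study's data.
# Factor A = attendance, factor B = survey year.
toy_knowledge_scores = {
    ("attendee", "2014"): [1, 2],
    ("attendee", "2015"): [3, 4],
    ("non-attendee", "2014"): [0, 1],
    ("non-attendee", "2015"): [0, 1],
}
print(two_way_anova_f(toy_knowledge_scores))  # {'A': 16.0, 'B': 4.0, 'A x B': 4.0}
```

In this toy data, attendees improve between years while non-attendees do not, which is what produces a nonzero interaction term.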

Figure 2: Summarizes 2014 and 2015 student development as writers, compared with the number of sessions attended. From 2014 to 2015, the mean number of sessions attended decreased while students’ self-assessment of their development as writers increased.

Table 2: Summarizes the results of an ANOVA showing the effects of attendance and year on perceived development. This illustrates that the more students attend the writing center, in either year, the more likely they are to feel that the writing center has helped them to develop. Furthermore, the significant interaction between year and attendance frequency highlights that the relationship between attendance and perceived development differed in the two years. In 2014, there is a weaker relationship between how often a student attends and how much they feel they are developing as a writer; by 2015, this relationship is much stronger. Thus, those who attend more are predictably more likely to feel that they have developed as writers.
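Table 2's interaction term indicates that the link between attendance and perceived development differs by year. A simple descriptive way to see such a difference (a complement to the ANOVA, not a substitute for it) is to compute the correlation between sessions attended and perceived development separately for each survey year. In this sketch, the function names and toy rows are hypothetical, and development scores are assumed to be already recoded so that higher means more perceived development; item 11 on the survey runs from 1 (Strongly Agree) to 5 (Strongly Disagree), so raw responses would need reversing first.

```python
from math import sqrt
from statistics import mean
from typing import Dict, List, Sequence, Tuple


def pearson_r(xs: Sequence[float], ys: Sequence[float]) -> float:
    """Pearson correlation coefficient between two equal-length samples."""
    x_bar, y_bar = mean(xs), mean(ys)
    covariation = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    spread_x = sum((x - x_bar) ** 2 for x in xs)
    spread_y = sum((y - y_bar) ** 2 for y in ys)
    return covariation / sqrt(spread_x * spread_y)


def r_by_year(rows: Sequence[Tuple[str, float, float]]) -> Dict[str, float]:
    """Group (year, sessions_attended, development) rows by year and correlate within each year."""
    groups: Dict[str, Tuple[List[float], List[float]]] = {}
    for year, sessions, development in rows:
        sessions_list, development_list = groups.setdefault(year, ([], []))
        sessions_list.append(sessions)
        development_list.append(development)
    return {year: pearson_r(xs, ys) for year, (xs, ys) in groups.items()}


# Invented rows for illustration only; development is assumed recoded so higher = more growth.
rows = [
    ("2014", 1, 3), ("2014", 2, 2), ("2014", 3, 4), ("2014", 4, 3),
    ("2015", 1, 2), ("2015", 2, 3), ("2015", 3, 5), ("2015", 4, 5),
]
for year, r in r_by_year(rows).items():
    print(year, round(r, 2))  # prints "2014 0.32", then "2015 0.95"
```

A within-year correlation only describes the pattern; whether the two years differ reliably is what the ANOVA's interaction term tests.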

Writing Center Survey

Please take a moment to answer the following questions to help Bristol Community College’s Writing Center (BCC WC) improve its services. Circle the answer that is correct for you.

1. How often do you use a BCC Writing Center?

Daily Weekly Monthly Once a Semester Never

2. There is a BCC Writing Center on each campus. True False

3. Students can drop off a paper to the BCC WC. True False

4. The BCC WC edits my paper. True False

5. I have been to the BCC WC. Yes No

6. I know where a BCC WC is located. Yes No

7. A BCC faculty member required me to attend the BCC WC. Yes No

8. Being required to attend the BCC WC benefitted me. Yes No

1‑Strongly Agree 2‑Agree 3‑Neither Agree nor Disagree 4‑Disagree 5‑Strongly Disagree

9. I consider myself a strong writer (1‑5): _____

10. I would like to learn new strategies for writing (1‑5): _____

11. The BCC WC has helped me develop as a writer (1‑5): _____

12. If you have used the Writing Center, please write two things that you learned:

13. Please list two or more services that you want the BCC WC to offer:

Thank you for your feedback!

Surveyor’s Initials: _________

Campus: _________________

Date and Time: ____________

Location (e.g. Classroom, Cafeteria, Library, etc.): _____________________________
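Turning circled paper responses like these into numbers for analyses such as those in Tables 1 and 2 requires a coding step. The sketch below shows one hypothetical way to do it: it maps item 1 to an ordinal frequency code, reverses items 9 through 11 so that higher numbers mean stronger agreement, and scores four knowledge items against an answer key. The item keys (q1, q2, and so on), the numeric codes, and especially the answer key are illustrative assumptions, not the coding scheme used in this study.

```python
from typing import Dict

# Ordinal codes for item 1 (higher = more frequent use); these numeric values are an assumption.
FREQUENCY_CODES: Dict[str, int] = {
    "Never": 0,
    "Once a Semester": 1,
    "Monthly": 2,
    "Weekly": 3,
    "Daily": 4,
}

# HYPOTHETICAL answer key for knowledge items 2, 3, 4, and 6; substitute your center's actual policies.
ANSWER_KEY: Dict[str, str] = {"q2": "True", "q3": "False", "q4": "False", "q6": "Yes"}


def reverse_likert(response: int) -> int:
    """Flip the survey's 1 (Strongly Agree) to 5 (Strongly Disagree) scale so 5 = strongest agreement."""
    if not 1 <= response <= 5:
        raise ValueError(f"Likert response out of range: {response}")
    return 6 - response


def knowledge_score(responses: Dict[str, str]) -> int:
    """Number of knowledge items (0-4) whose circled answer matches ANSWER_KEY."""
    return sum(responses.get(item) == correct for item, correct in ANSWER_KEY.items())


def code_survey(responses: Dict[str, str]) -> Dict[str, int]:
    """Convert one transcribed paper survey into numeric fields for analysis."""
    return {
        "frequency": FREQUENCY_CODES[responses["q1"]],
        "knowledge": knowledge_score(responses),
        "writing_confidence": reverse_likert(int(responses["q9"])),
        "wants_new_strategies": reverse_likert(int(responses["q10"])),
        "perceived_development": reverse_likert(int(responses["q11"])),
    }


# One invented respondent, for illustration only.
example = {"q1": "Monthly", "q2": "True", "q3": "True", "q4": "False", "q6": "Yes",
           "q9": "2", "q10": "1", "q11": "3"}
print(code_survey(example))
```

Keeping the codes and answer key in one place makes it easier to apply identical coding across survey years, which is the kind of consistency replication depends on.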